Kubernetes Hands-On for the CKA Exam: Exploring Pods, ReplicaSets and Deployments

Introduction

This article is the first of a series of hands-on practices I’ve created while preparing for the CKA exam.

It aims to explore the ownership relations between pods, ReplicaSets and Deployments, which are the building blocks of applications running on Kubernetes.

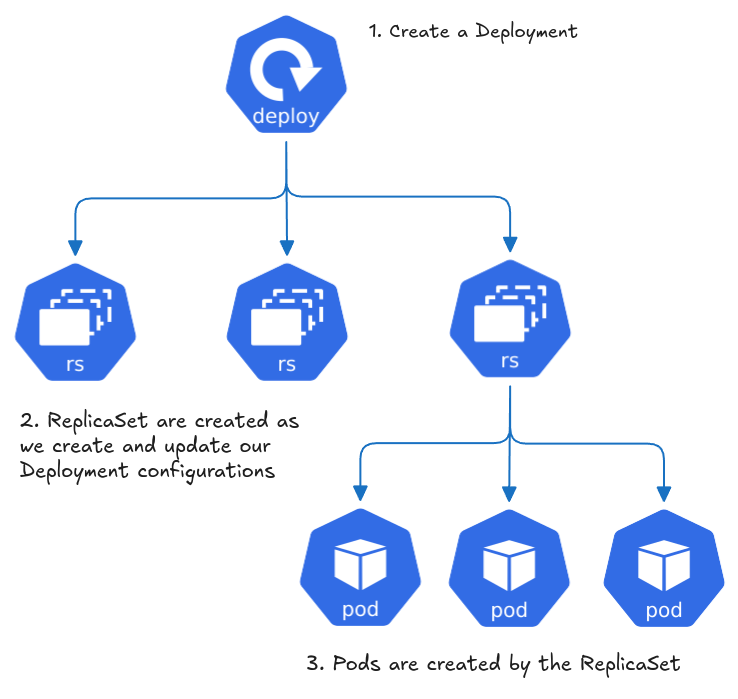

The usual workflow for creating workloads in Kubernetes is to create a Deployment, which manages one or more ReplicaSets, which in turn create and manage the desired pods.

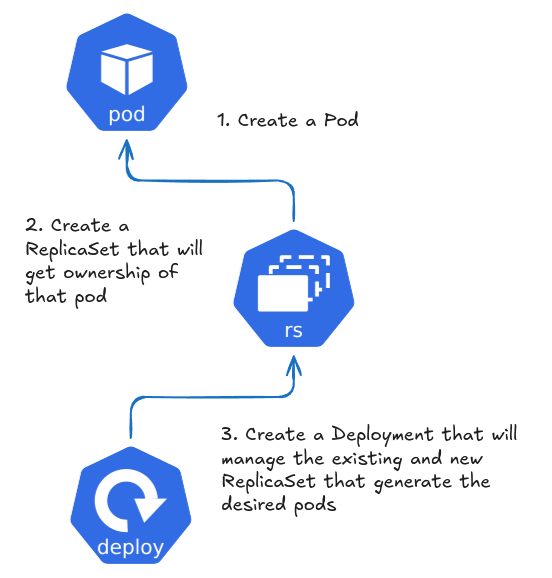

In this practice, we are going to explore these ownership relationships by walking the usual workflow in reverse: first we create a pod, then a ReplicaSet that takes over that existing pod, and finally a Deployment that takes over that existing ReplicaSet.

Practice Environment

If you don’t already have access to a Kubernetes cluster, you can set up a cluster on Play with Kubernetes to follow along.

Hands-On

1. Creating the Pod

The first step is to create a single pod running Nginx. Pay attention to the labels defined in the YAML, since the ReplicaSet's selector will match on them:

    apiVersion: v1
    kind: Pod
    metadata:
      name: nginx-pod
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx
        image: nginx:latest
        ports:
        - containerPort: 80

Create a file and apply it. List the pods and verify it’s running:

    $ kubectl apply -f pod.yaml
    pod/nginx-pod created

    $ kubectl get pods
    NAME        READY   STATUS    RESTARTS   AGE
    nginx-pod   1/1     Running   0          9s
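Instead of typing the manifest by hand, kubectl can generate a starting point. The sketch below wraps `kubectl run` with a client-side dry run. The helper name `generate_pod_manifest` is hypothetical, and the generated YAML may differ from the manifest above in small details (for example, the container name), so review it before applying:

```shell
# Hypothetical helper: prints a pod manifest similar to the one above without
# creating anything on the cluster (--dry-run=client only renders the object).
generate_pod_manifest() {
  kubectl run nginx-pod \
    --image=nginx:latest \
    --labels=app=nginx \
    --port=80 \
    --dry-run=client -o yaml
}

# Usage (requires kubectl):
#   generate_pod_manifest > pod.yaml
#   kubectl apply -f pod.yaml
```

Passing `--labels=app=nginx` matters here, because the label is what the ReplicaSet in the next step selects on.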

At this point we can look at the owner references, which should be empty:

    $ kubectl get pod nginx-pod -o jsonpath='{.metadata.ownerReferences[0].name}'

The command prints nothing, because no controller owns the pod yet.
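An empty line is easy to misread, so it can help to make the "no owner" case explicit. A minimal sketch, assuming a POSIX shell; the helper name `owners_of` is hypothetical:

```shell
# Hypothetical helper: prints the names of a resource's owners, or "<none>"
# when metadata.ownerReferences is empty or absent.
owners_of() {
  # $1 = resource kind (e.g. pod, rs), $2 = resource name
  owners=$(kubectl get "$1" "$2" \
    -o jsonpath='{.metadata.ownerReferences[*].name}') || return 1
  echo "${owners:-<none>}"
}

# Usage (requires a cluster):
#   owners_of pod nginx-pod
```

The same helper works for any resource kind, so it can be reused later for the ReplicaSet.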

2. Getting owned by a ReplicaSet

The next step is to create a ReplicaSet with a selector matching the pod's labels. As soon as it's created, it takes ownership of that pod. Pay attention to the number of replicas:

    apiVersion: apps/v1
    kind: ReplicaSet
    metadata:
      name: nginx-rs
    spec:
      replicas: 2
      selector:
        matchLabels:
          app: nginx
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
          - name: nginx
            image: nginx:latest
            ports:
            - containerPort: 80

Create a file for the ReplicaSet and apply it. List the pods and verify that only one new pod is running:

    $ kubectl apply -f replicaset.yaml
    replicaset.apps/nginx-rs created

    $ kubectl get rs
    NAME       DESIRED   CURRENT   READY   AGE
    nginx-rs   2         2         2       6s

    $ kubectl get pods
    NAME             READY   STATUS    RESTARTS   AGE
    nginx-pod        1/1     Running   0          3m15s
    nginx-rs-5t97q   1/1     Running   0          9s

Did it work? If you’re just starting out with Kubernetes you may not be sure.

Remember that spec.replicas is 2. If the ReplicaSet had not matched the existing pod, it would have created two new pods. It created only one, which means the existing pod now counts toward the desired replicas and is owned by the nginx-rs ReplicaSet.
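The arithmetic behind that reasoning can be written down as a small sketch. This is a simplified model, not the controller's actual code, and the function name `pods_to_create` is hypothetical:

```shell
# Simplified model of the ReplicaSet's decision: it only creates the pods
# that are missing to reach spec.replicas, counting the pods its selector
# already matches.
pods_to_create() {
  # $1 = desired replicas, $2 = existing pods matched by the selector
  missing=$(( $1 - $2 ))
  if [ "$missing" -lt 0 ]; then
    missing=0   # a surplus is handled by deleting pods, not creating them
  fi
  echo "$missing"
}

pods_to_create 2 1   # nginx-pod is matched: prints 1
pods_to_create 2 0   # nothing matched: prints 2
```

Seeing one new pod instead of two is therefore the evidence that nginx-pod was matched.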

We can also look at the owner references again, which now should mention the nginx-rs ReplicaSet:

    $ kubectl get pod nginx-pod -o jsonpath='{.metadata.ownerReferences[0].name}'
    nginx-rs

Another way to check this is to describe the pod and look for the Controlled By field:

    $ kubectl describe pod nginx-pod | grep Controlled
    Controlled By:  ReplicaSet/nginx-rs
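To see the owner of every pod at once rather than one pod at a time, a custom-columns listing is an option. A minimal sketch; the helper name `list_pod_owners` and the column titles are arbitrary choices:

```shell
# Hypothetical helper: lists each pod with the kind and name of its first
# owner reference.
list_pod_owners() {
  kubectl get pods \
    -o custom-columns='NAME:.metadata.name,OWNER_KIND:.metadata.ownerReferences[0].kind,OWNER:.metadata.ownerReferences[0].name'
}

# Usage (requires a cluster):
#   list_pod_owners
```

At this point in the practice, both nginx-pod and nginx-rs-5t97q should list the nginx-rs ReplicaSet as their owner.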

3. Managing everything with a Deployment

The last step is to create a Deployment to manage the existing ReplicaSet:

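    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nginx-deployment
    spec:
      replicas: 2 # same count as the ReplicaSet, matching the output below
      selector:
        matchLabels:
          app: nginx
      template: # kept identical to the ReplicaSet's pod template
        metadata:
          labels:
            app: nginx
        spec:
          containers:
          - name: nginx
            image: nginx:latest
            ports:
            - containerPort: 80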

Create a file for the Deployment and apply it:

    $ kubectl apply -f deployment.yaml
    deployment.apps/nginx-deployment created

Listing the ReplicaSets shows that the Deployment created a new ReplicaSet, which in turn created a new set of pods:

    $ kubectl get rs
    NAME                        DESIRED   CURRENT   READY   AGE
    nginx-deployment-96b9d695   2         2         2       4s
    nginx-rs                    2         2         2       14m

    $ kubectl get pods
    NAME                              READY   STATUS    RESTARTS   AGE
    nginx-deployment-96b9d695-4jvks   1/1     Running   0          7s
    nginx-deployment-96b9d695-nwksk   1/1     Running   0          7s
    nginx-pod                         1/1     Running   0          18m
    nginx-rs-5t97q                    1/1     Running   0          15m

Compare the labels in the pod and ReplicaSet manifests and you'll notice the pod has the label app: nginx, but the ReplicaSet has no labels in its own metadata. The labels under spec.template apply to the pods it creates, not to the ReplicaSet itself.

Since the ReplicaSet labels and the Deployment selectors did not match, the Deployment did not claim ownership of the ReplicaSet.

We must configure these same labels on the ReplicaSet so as to match the selector on the Deployment manifest.
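The matching rule can be sketched in plain shell. This is a simplified model of `matchLabels` only, and the comma-separated `key=value` format and the function name `selector_matches` are assumptions of the sketch. In a real cluster the ReplicaSet must also have no other controlling owner before it can be adopted:

```shell
# Simplified model of matchLabels: every key=value pair in the selector must
# be present in the resource's labels. Both arguments are comma-separated
# key=value lists.
selector_matches() {
  selector=$1
  labels=$2
  old_ifs=$IFS
  IFS=,
  for pair in $selector; do
    case ",$labels," in
      *",$pair,"*) ;;                  # pair found, keep checking
      *) IFS=$old_ifs; return 1 ;;     # pair missing, no match
    esac
  done
  IFS=$old_ifs
  return 0
}

selector_matches "app=nginx" "" || echo "no labels: not adopted"
selector_matches "app=nginx" "app=nginx" && echo "labels match: can be adopted"
```

The first call mirrors the unlabeled nginx-rs, and the second mirrors the ReplicaSet once it carries app: nginx in its own metadata.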

Because the Deployment does not own nginx-rs, we can delete the Deployment without removing our existing ReplicaSet and pods:

    $ kubectl delete -f deployment.yaml
    deployment.apps "nginx-deployment" deleted

    $ kubectl get rs
    NAME       DESIRED   CURRENT   READY   AGE
    nginx-rs   2         2         2       16m

    $ kubectl get pods
    NAME             READY   STATUS    RESTARTS   AGE
    nginx-pod        1/1     Running   0          19m
    nginx-rs-5t97q   1/1     Running   0          16m

Here is the updated ReplicaSet manifest with the labels added:

    apiVersion: apps/v1
    kind: ReplicaSet
    metadata:
      name: nginx-rs
      labels: # notice we've added the labels
        app: nginx
    spec:
      replicas: 2
      selector:
        matchLabels:
          app: nginx
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
          - name: nginx
            image: nginx:latest
            ports:
            - containerPort: 80

Save the updated manifest (here as replicaset-fixed.yaml) and apply it. The ReplicaSet is reconfigured in place, and the pods on the cluster should not change:

    $ kubectl apply -f replicaset-fixed.yaml
    replicaset.apps/nginx-rs configured

    $ kubectl get rs
    NAME       DESIRED   CURRENT   READY   AGE
    nginx-rs   2         2         2       18m

    $ kubectl get pods
    NAME             READY   STATUS    RESTARTS   AGE
    nginx-pod        1/1     Running   0          21m
    nginx-rs-5t97q   1/1     Running   0          18m
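Since the listings look the same as before, it is worth confirming that the label actually landed on the ReplicaSet. A minimal sketch that lists ReplicaSets by label selector; the helper name `rs_matching` is hypothetical:

```shell
# Hypothetical helper: prints the ReplicaSets whose own labels match the
# given selector, which is the same kind of lookup a Deployment's selector
# performs.
rs_matching() {
  # $1 = label selector, e.g. app=nginx
  kubectl get rs -l "$1" -o name
}

# Usage (requires a cluster):
#   rs_matching app=nginx
```

If the update worked, the command should print replicaset.apps/nginx-rs. Before the fix it would have printed nothing.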

Apply the Deployment again:

    $ kubectl apply -f deployment.yaml
    deployment.apps/nginx-deployment created

    $ kubectl get rs
    NAME       DESIRED   CURRENT   READY   AGE
    nginx-rs   2         2         2       19m

    $ kubectl get pods
    NAME             READY   STATUS    RESTARTS   AGE
    nginx-pod        1/1     Running   0          22m
    nginx-rs-5t97q   1/1     Running   0          19m

This time there are no new ReplicaSets or pods, as expected. As we did before with the pod, we can verify the owner reference of the ReplicaSet:

    $ kubectl get rs nginx-rs -o jsonpath='{.metadata.ownerReferences[0].name}'
    nginx-deployment

    $ kubectl describe rs nginx-rs | grep Controlled
    Controlled By:  Deployment/nginx-deployment

Update the number of replicas of the Deployment (to 3 in this example) and you'll notice the ReplicaSet is scaled to the same number, as expected:

    $ kubectl edit deployment nginx-deployment
    deployment.apps/nginx-deployment edited

    $ kubectl get rs
    NAME       DESIRED   CURRENT   READY   AGE
    nginx-rs   3         3         2       22m
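`kubectl edit` opens an editor, which is awkward to script. A non-interactive alternative is `kubectl scale`. A minimal sketch; the wrapper name `scale_deployment` is hypothetical:

```shell
# Hypothetical helper: sets spec.replicas on a Deployment without opening
# an editor.
scale_deployment() {
  # $1 = Deployment name, $2 = desired replicas
  kubectl scale deployment "$1" --replicas="$2"
}

# Usage (requires a cluster):
#   scale_deployment nginx-deployment 3
#   kubectl get rs
```

Note that a later `kubectl apply -f deployment.yaml` would set the replica count back to whatever the manifest declares.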

What happens if we delete the Deployment now?

    $ kubectl delete -f deployment.yaml
    deployment.apps "nginx-deployment" deleted

    $ kubectl get rs
    No resources found in default namespace.

    $ kubectl get pods
    No resources found in default namespace.

Since the Deployment now owns the ReplicaSet, which in turn owns the pods, deleting the Deployment removes everything from the cluster.
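Cascading deletion is the default, but it is not the only option. As a sketch, assuming a kubectl version that supports `--cascade=orphan`, the Deployment can be deleted while leaving its ReplicaSet and pods running. The wrapper name `delete_deployment_keep_children` is hypothetical:

```shell
# Hypothetical helper: deletes only the Deployment object and orphans its
# dependents instead of garbage-collecting them.
delete_deployment_keep_children() {
  # $1 = Deployment name
  kubectl delete deployment "$1" --cascade=orphan
}

# Usage (requires a cluster):
#   delete_deployment_keep_children nginx-deployment
#   kubectl get rs,pods
```

Checking the owner reference of nginx-rs afterwards is a good way to confirm that it no longer points at the Deployment.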

Follow Up

A great way to learn is to apply your knowledge, as you just did by following this practice. But an even better way is to evaluate and think critically about what you are studying.

Try to think of new scenarios regarding the concepts practiced. Then test them out in a practice cluster. For example, what would happen:

- If we change the labels of the ReplicaSet or pod after ownership is established?

- If we apply a Deployment on top of a properly labeled ReplicaSet but the container spec differs?

- If we create a new ReplicaSet with labels matching the selector of an existing Deployment?
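To set up the first scenario on a practice cluster, a pod's label can be changed in place with `kubectl label`. A minimal sketch; the helper name `relabel_pod` and the label value `debug` are arbitrary:

```shell
# Hypothetical helper: overwrites the app label of a running pod.
relabel_pod() {
  # $1 = pod name, $2 = new value for the app label
  kubectl label pod "$1" app="$2" --overwrite
}

# Usage (requires a cluster):
#   relabel_pod nginx-pod debug
#   kubectl get pods --show-labels
```

Compare the pod list and the owner references before and after the change, and try to predict the result first.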